Final WordsĪs you may have noticed, we pulled these slides from a number of different CXL sessions in the workshops today. One can use lower-cost DDR3, higher-cost HBM, or other types of memory so even the types of CXL attached memory may have different performance profiles. There is still a lot of work that will go into making that a reality, but it is work that will need to happen. HC34 Compute Express Link CXL Memory Transparent Tiered The idea is that memory pages can either be hot or cold and by monitoring the usage and then adjusting placement accordingly, it can best take advantage of different tiers of memory. Meta discussed how it is looking at using tiered memory in its infrastructure to save on memory costs. HC34 Compute Express Link CXL Memory Tiered Memory Again, below note the “CXL <= NUMA socket-to-socket latency” line that is similar to what we have discussed before and is in another presentation above. The net result is that folks are now starting to talk about tiering memory, and mechanisms to utilize memory with different bandwidth and latency characteristics. Finally, we can have more memory that sits outside of a system and has even higher latency. We can then have CXL memory/ memory on other NUMA nodes that incur additional latency. In a system, we can have local memory on a host CPU or other device. That brings a really interesting challenge. HC34 Compute Express Link CXL 3 Fabric Latencies Here, as we get to a more disaggregated system architecture (that we covered in our CXL 3.0 piece), memory latencies can grow to 600ns. One of the other exciting aspects about CXL is the ability to use CXL fabric for scaling out the system. Flits here are being used similar to what folks would be more familiar with in the networking space (Flit is a FLow control un IT.) 
HC34 Compute Express Link CXL 3 Doubles Bandwidth With Same Latency Flit PCIe Gen6 PHYs add more bandwidth but CXL has some secret sauce that gets 2-5ns better latency due to how it crafts its Flits. We recently saw a XConn XC50256 CXL 2.0 Switch Chip and that is massive, but the CXL 3.0 versions are still some time away.

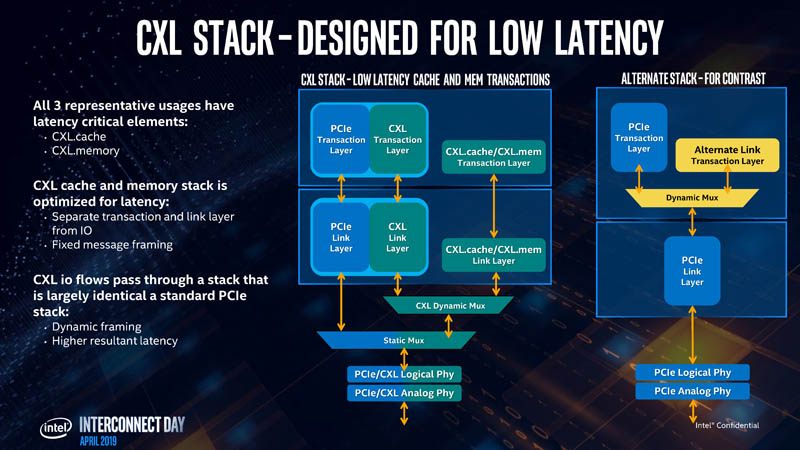

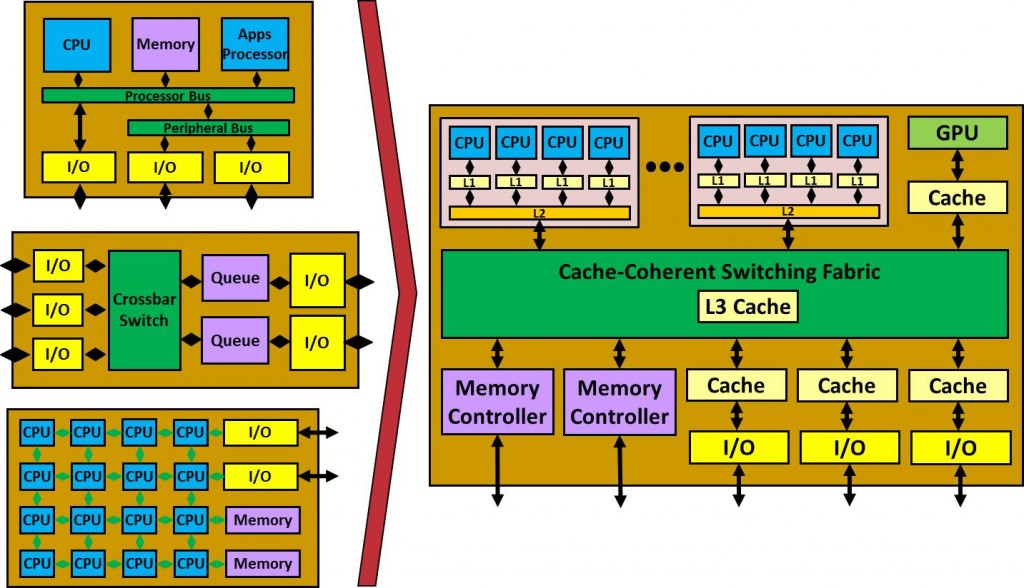

That is where we get things like a PCIe Gen6 PHY for twice the bandwidth and also fabric connectivity for multi-level switching using port-based instead of host-based switches. Since Optane turned into a tier that was cached with standard DDR4 memory, it ended up occupying a similar spot to where CXL is envisioned, except CXL benefits the entire ecosystem, not just Intel Xeon CPUs.īeyond CXL 2.0 is CXL 3.0. As we covered in Glorious Complexity of Intel Optane DIMMs and Micron Exiting 3D XPoint. Micron Computex 2021 Keynote Memory Pyramid The other Optane partner Micron replaced Optane with CXL as it backed out of the JV. Intel Optane DC Persistent Memory Pyramid Slide If CXL seems to be occupying a familiar spot, this is below DRAM and above NVMe SSDs, or exactly where Optane sat in Intel’s stack before the Intel Optane $559M Impairment with Q2 2022 Wind-Down. HC34 Compute Express Link CXL Memory Tiers And Latencies The CXL Consortium is using 80-140ns of latency for main memory and 170-250ns for CXL memory. The pyramid goes from registers and caches, out to memory, then to CXL, then to slower memory. HC34 Compute Express Link CXL Stack Designed For Low LatencyĪt Hot Chips 34, we got a new pyramid, but it is similar to what we have seen previously. At Hot Chips 34, we got some validation of that point.

The main goal, and what we have used as a rough guide, is that accessing memory over CXL should be roughly similar to the latency of moving socket-to-socket in a modern server. PCIe has been around for many years, and CXL sits alongside PCIe Gen5 infrastructure (for CXL 1.1 and 2.0.) Putting memory onto the same physical lanes as PCIe Gen5, then adding a CXL controller on the other end, means that there will be additional latency versus traditional DDR interfaces.

Since we get that question often, it seemed worthwhile to just make a resource dedicated to that question. One tidbit that we have alluded to previously, but did not have a lot on, is the latency added by using CXL. This was an in-depth tutorial into things like the mechanisms of cache actions. HC34 Compute Express Link CXL Stack Latencies CoverĪt Hot Chips 34 (HC34) there was a tutorial on CXL.
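To make the hot/cold tiering idea a bit more concrete, here is a minimal Python sketch. It is not how Meta or any operating system implements tiering. The page names, the access counts, and the choice to use the midpoint of each quoted latency range (80-140ns for main memory, 170-250ns for CXL memory) are all assumptions made purely for illustration.

```python
# Midpoints of the latency ranges quoted from the CXL Consortium slides (ns).
# Using the midpoint is an assumption made only for illustration.
TIER_LATENCY_NS = {
    "local_dram": (80 + 140) / 2,   # 110.0
    "cxl_memory": (170 + 250) / 2,  # 210.0
}


def place_pages(access_counts, fast_tier_pages):
    """Put the most-accessed (hot) pages in local DRAM, the rest in CXL memory."""
    ranked = sorted(access_counts, key=access_counts.get, reverse=True)
    hot = set(ranked[:fast_tier_pages])
    return {page: ("local_dram" if page in hot else "cxl_memory")
            for page in access_counts}


def average_latency_ns(access_counts, placement):
    """Access-weighted average latency for a given page placement."""
    total = sum(access_counts.values())
    weighted = sum(count * TIER_LATENCY_NS[placement[page]]
                   for page, count in access_counts.items())
    return weighted / total


# Hypothetical access counts per page over one monitoring interval.
access_counts = {"page_a": 900, "page_b": 60, "page_c": 30, "page_d": 10}
placement = place_pages(access_counts, fast_tier_pages=1)
print(placement)
print(average_latency_ns(access_counts, placement))
```

With these made-up numbers, keeping only the single hottest page in local DRAM gives an access-weighted average of 120ns, versus 210ns if every page sat in CXL memory. That gap is the intuition behind monitoring usage and adjusting placement.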
